2026 Trust and Safety Predictions: Using AI for Fraud Detection and Prevention

11 December 2025

By Byron Fernandez

TDCX Group CIO and EVP

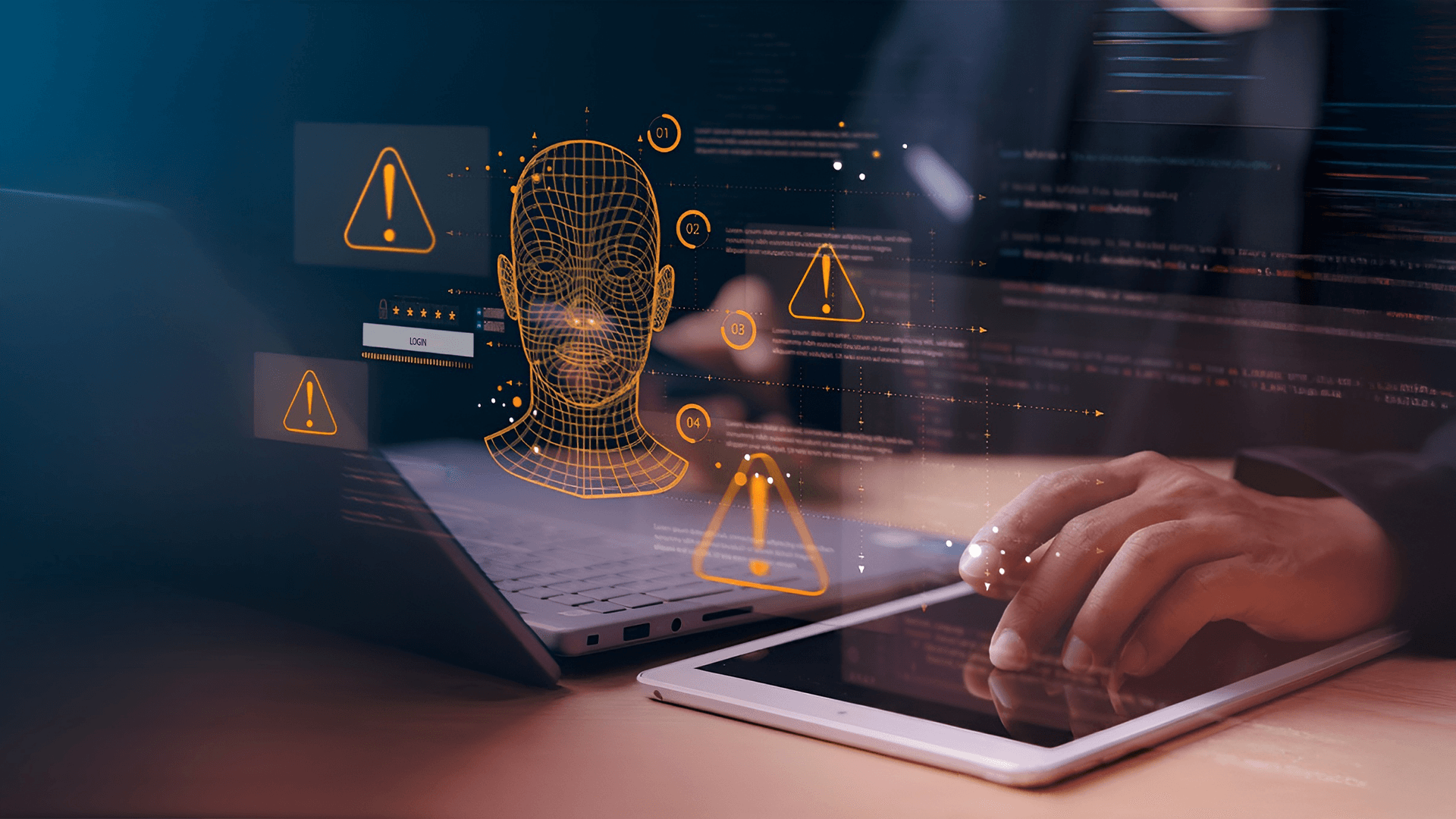

AI, generative AI (GenAI), and now agentic AI have scaled fraud to machine speed, with analysts expecting global losses to climb to US$40 billion by 2027. What used to be opportunistic or seasonal has become continuous and computational: Selfie fraud rose 73% year over year in Asia Pacific, facial recognition evasion surged 60% in Latin America, deepfake incidents jumped 237% in the US, and synthetic identities grew by more than 160% across Europe.

What does this mean for banking, FinTech, and the digital economy at large? Rising compliance costs, growing identity risks, and an erosion of digital trust at a time when seamless digital experiences are the baseline expectation. When text, voices, images, documents, and even decisions can be generated or manipulated by machines, fraud becomes agentic, autonomous, and adaptive.

Exposure won’t just come from fraudsters misusing AI, but also from customers using it in their daily lives. Next year, at least 30% of consumers are expected to rely on GenAI tools to make financial and healthcare decisions. By 2030, half of all customer service requests will come from AI agents transacting on behalf of human customers.

The enterprise’s perimeter will also fracture from the inside. By 2028, one in four candidate profiles will be fake, fueled by AI-generated résumés and deepfakes. Beyond the workforce, over two-thirds of leaders warn that the unchecked proliferation of falsified third-party identities will be a top vector for AI-driven fraud slipping into their operations.

All of this elevates identity as 2026’s currency for trust and safety. Every interaction where someone (or something) asserts who they are becomes a potential fault line. However, many of these checkpoints were built for static, traditional verification that machine-generated deception already outruns.

This is also why I think that next year, we’ll see a more intense arms race between AI-powered defenses and AI-enabled fraud, with customer experience (CX) as their center of gravity. It’s where identity is asserted, intent is expressed, friction surfaces, and anomalies first show up. A CX-focused approach to trust and safety, especially for fraud detection and prevention, is the fortification that enterprises need to preserve trust as a business asset.

The rise of AI-driven identity fraud needs a CX-led approach to detect and prevent it

Synthetic identities built from AI-generated documents, deepfake biometrics, and profiles assembled from disparate digital breadcrumbs are set to overtake traditional identity theft next year. In the US alone, losses are projected to reach US$23 billion.

This shift from stolen to engineered identities will change how companies onboard, authenticate, and verify customers. Fraud will increasingly appear as spikes in “intent volume,” or rapid attempts to trigger or manipulate transactions before a human can intervene. GenAI lowers the barrier: more than 350 voice-cloning tools already exist, and the rise of GenAI-assisted shopping exposes customers to further risk.

This extends to enterprises. One in three companies across aviation, banking, FinTech, healthcare, and telecommunications already contend with AI-driven impersonation. A contractor accessing a ticketing platform or a third-party partner logging into an operations portal can become the conduit that fraudsters can hijack.

With CX and customer service (CS) organizations expected to wrestle with 300% more fraud attempts in the next two years, it’s no surprise that investment in deepfake detection technologies will jump nearly 40% in 2026. While heavier, more invasive controls are a valid next step, the churn could outweigh the ROI if they’re poorly designed. Nearly 80% of customers abandon purchases when checkout feels difficult or time-consuming, and one in three drops off due to complex forms or forced verification.

Leaders should adopt a CX-first approach that informs how and where identity assurance can be reinforced without slowing the customer journey down:

- Treat identity as a journey, not a checkpoint. Apply progressive, context-based verification to minimize friction for legitimate users while still checking for meaningful risks. This helps preserve speed without compromising security.

- Enable human-AI collaboration. AI can handle scale, pattern recognition, and anomaly detection while humans interpret intent, emotional cues, and escalation dynamics. Together, they can catch AI-fied identities before the CX breaks.

- Redesign verification for dynamic environments. Verification must be able to pause, validate, and seamlessly resume, which enables interventions without forcing users or customers into rigid resets or abandoned sessions.

- Position CX as a line of defense. Identity-engineered fraud often appears as noise, such as unusual urgency, AI-like responses, confusion after interacting with a fake agent, or abrupt know-your-customer (KYC) changes. Analytics-enabled CX teams can turn these indicators into proactive, actionable intelligence.

- Extend identity assurance across partners. Applying consistent identity standards closes one of the most overlooked gateways for AI-generated fraud.
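To make the idea of progressive, context-based verification concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a TDCX method or a production rule set: the `SessionContext` fields, the signal weights, the US$1,000 threshold, and the step names are all hypothetical. The point is only that low-risk sessions pass without friction while riskier ones are stepped up.

```python
from dataclasses import dataclass


@dataclass
class SessionContext:
    """Hypothetical risk signals collected during a customer session."""
    new_device: bool
    geo_mismatch: bool
    amount: float
    kyc_changed_recently: bool


def risk_score(ctx: SessionContext) -> int:
    """Add up illustrative weights for each risk signal that is present."""
    score = 0
    if ctx.new_device:
        score += 2
    if ctx.geo_mismatch:
        score += 2
    if ctx.kyc_changed_recently:
        score += 3  # abrupt KYC changes are weighted highest in this sketch
    if ctx.amount > 1000:
        score += 1
    return score


def required_step(ctx: SessionContext) -> str:
    """Map the score to a verification step; thresholds are assumptions."""
    score = risk_score(ctx)
    if score <= 1:
        return "none"          # legitimate-looking session: no added friction
    if score <= 4:
        return "otp"           # moderate risk: lightweight step-up
    return "human_review"      # high risk: pause and hand off to a person


if __name__ == "__main__":
    print(required_step(SessionContext(False, False, 50.0, False)))
    print(required_step(SessionContext(True, True, 5000.0, True)))
```

In practice, the weights and thresholds would be tuned against observed fraud and abandonment data, so that added checks land only where the risk justifies the friction.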

Agentic insiders and hiring fraud will compel enterprises to expand controls from knowing the customer to knowing the machines

The same AI agents and AI-generated identities reshaping consumer fraud have also entered the workforce, partner ecosystems, and the machine-operated layers of the enterprise.

News of deepfake applicants flooding the job market and slipping past recruiters this year already gives a glimpse of how hiring fraud will unfold next year. A single hiring platform, in fact, blocked over 75 million AI-based face-spoofing attempts in the past year. Motivations vary, but hiring is now a mainstream channel for fraud.

Insider threats won’t just be human, either. The modern enterprise is increasingly operated by machines acting like employees. Non-human identities (NHIs), which include service accounts, APIs, authentication tokens, automation workflows, and AI-driven processes, grew 44% year over year. For every human employee, there are 144 NHIs behind the scenes identifying, authenticating, and authorizing devices, IT systems, and business processes. Their work includes issuing or approving actions, accessing customer data, moving funds, and escalating cases, to name a few. Imagine what can happen when deepfakes figure into the equation.

Whenever machine identities rely on human or biometric verification, AI-generated deception can pass through controls not designed to detect synthetic impersonation. This is why cybersecurity experts predict the rise of “agentic insiders,” where compromised AI agents and deepfakes conduct unauthorized activities.

When engineered people and exploitable machines collide, the impact lands directly on how employees work and how customers are served. Leaders should adopt a multilayered framework for understanding how these identities interact with the business:

- Know your employees (KYE). Verify the human behind the paperwork and throughout their lifecycle. Continuously validate behavioral patterns, device trust, geolocation consistency, and access usage not only during hiring but also every time their roles or permissions change.

- Know your business (KYB). Apply the same rigor used for employees to vendors, suppliers, and contractors. Enforce zero-trust principles across these relationships so that access, credentials, and privileges are validated every time they interact with the company’s systems.

- Know your machines (KYM). Maintain an up-to-date inventory of NHIs and define what each is allowed to see or do. Continuously monitor machine-initiated actions for abnormal patterns to ensure that automation doesn’t become an unguarded entry point.

- Know your experience (KYEx). Use customer and employee journeys as actionable intelligence not only for reinforcing fraud detection and prevention, but also for reducing friction in the user experience (UX) of the company’s identity controls.

- Know your AI (KYAI). Establish governance and provenance for AI systems and agents. Define clear boundaries for what they can access or initiate, log and audit their decisions, and monitor for behavioral drift or attempts at manipulation.
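As one way to picture the KYM and KYAI points above, the following Python sketch keeps a small inventory of non-human identities and checks each machine-initiated action against what that identity is allowed to do. The identity names, owners, action labels, and the `audit_action` helper are all hypothetical placeholders; a real deployment would draw on the organization's own identity and access management tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MachineIdentity:
    """Hypothetical inventory record for one non-human identity (NHI)."""
    name: str
    owner: str                  # accountable human team
    allowed_actions: frozenset  # what this identity may see or do


# Illustrative inventory; entries are assumptions, not real systems.
INVENTORY = {
    "refund-bot": MachineIdentity(
        "refund-bot", "cx-ops", frozenset({"read_case", "issue_refund"})
    ),
    "report-job": MachineIdentity(
        "report-job", "analytics", frozenset({"read_case"})
    ),
}


def audit_action(identity_name: str, action: str, inventory=INVENTORY) -> str:
    """Return a decision for a machine-initiated action."""
    identity = inventory.get(identity_name)
    if identity is None:
        # An identity missing from the inventory is itself a warning sign.
        return "block: unknown identity"
    if action not in identity.allowed_actions:
        # In-inventory identity acting outside its defined scope.
        return "flag: out-of-scope action"
    return "allow"


if __name__ == "__main__":
    print(audit_action("refund-bot", "issue_refund"))
    print(audit_action("report-job", "issue_refund"))
    print(audit_action("shadow-agent", "read_case"))
```

Logging every decision from a check like this would also give the audit trail that the KYAI point calls for.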

TDCX turns AI-fication into strategic advantage in fraud detection and prevention

AI-fication is reengineering identity into an interplay of human and nonhuman actions that can enrich or disrupt our daily lives. This might make the road ahead feel uncertain, but it also reveals a clearer path forward. The same technologies that can be abused to accelerate fraud can also sharpen our visibility into where people or machines veer off course. They can show the stretches where the deviations harden into roadblocks, and where clearing them prevents the obstructions from reaching employees, customers, and the bottom line.

TDCX operates at this convergence. Fraud might first register in a security console, but its consequences unfold in the experience: a job candidate whose responses don’t feel human, refunds and chargebacks to a nonexistent customer, an agentic copilot acting with no supervision. These are the terrains we navigate every day across our global campuses, with CX-led data intelligence and human expertise as the engine that keeps our journey steady. In fact, we’ve helped enterprises harness that same synergy across their CX portals, support platforms, and customer apps by testing their bandwidth, uncovering technical blind spots, and mapping where failures would be felt by customers or employees.

Indeed, our approach has distinguished TDCX across the industry, from operational resilience and service excellence to trusted data stewardship. These recognitions affirm our strength in safeguarding the identities, integrity, and interactions that enable the future of the businesses we work with.