Chatbot Mystery Shopping: After All the Rage in AI and GenAI, Has Anything Changed in CX?

2 March 2025

By Lianne Dehaye (TDCX AI Senior Director)

and Ashley Yue (Assistant AI Client Solutions Manager)

Smarter automation. Seamless self-service. Personalized interactions at scale. AI and generative AI (GenAI) weren’t just upgrades to customer experience (CX). They were supposed to redefine it.

The promises were compelling: CX that is faster, more intuitive, more human, and infinitely scalable. Businesses bought in. By 2028, banks alone will pour US$18.4 billion into AI-powered customer support and self-service capabilities. Meanwhile, 92% of telecommunications leaders and 65% of travel and hospitality executives are banking on GenAI to power their chatbots and virtual assistants.

It wasn’t just isolated adoption. Next year, more than 80% of enterprises are expected to have deployed GenAI-enabled applications — up from just 5% in 2023 — while 90% of companies globally are projected to have GenAI as a workforce partner. AI-powered chatbots, in fact, already handled 42% more retail transactions than they did a year ago. In the US, 63% of travelers engaged with AI chatbots to plan their trips.

The numbers make it seem inevitable. Except, it didn’t happen that way. Somewhere between the AI-first vision and the reality of customer interactions, a different story emerged.

The trough of disillusionment: Is AI for CX overpromised, underprepared?

A year ago, analysts predicted that 85% of all customer service interactions this year would be AI-driven. In reality, the same experts recently found that a mere 8% of customers had actually used a chatbot in their last experience with customer support. Only 14% of customer issues were resolved through self-service, and 64% of customers said they would prefer that companies stop using AI altogether.

What we witnessed was the classic hype cycle. Businesses bought into the vision of AI-powered CX, an innovative concept that promised a new experience. However, on its first run, the technology showed promise but also revealed growing pains. Users found chatbots that hallucinated, virtual assistants that collapsed outside a scripted flow, and AI systems that still couldn’t grasp context, emotion, or nuance.

The costs were steep, too. A single GenAI-powered virtual assistant can cost between US$5 million and US$6.5 million to roll out. It’s a hefty price tag for a solution that, more often than not, still escalates customers to human agents for resolution. It’s no surprise, then, that analysts and experts tempered their forecasts. By the end of 2025, 30% of current GenAI projects are estimated to be abandoned.

Even with the hurdles, executives aren’t giving up on AI. Nearly 60% of the world’s top 2,000 companies still rank AI and GenAI as their top technology priorities for CX.

While optimism and enthusiasm remain strong, expectations are changing: 66% of executives said they’re ambivalent about their progress. Half even admitted they don’t have clear investment priorities. Spend on AI infrastructure has doubled, yet many are still waiting for tangible breakthroughs.

It’s not a question of whether AI can deliver, but when and how. Businesses that rushed to adopt AI are now realizing that it’s not a panacea. It needs a smarter prescription for implementation, a healthy dose of human-AI synergy, and a reality check. Among surveyed companies, for example, fewer than 30% of current AI and GenAI experiments will be fully scaled. Meanwhile, companies that figure humans into the equation are starting to see meaningful, sustainable gains.

Now, agentic AI and even DeepSeek are entering the spotlight. As AI further evolves beyond scripted automation, it raises questions anew. How will customers engage with AI-powered self-service? Will it improve the quality of service and reduce customer effort — or introduce new pain points?

These were the questions we set out to explore with our own mystery shopping exercise. We tested AI-powered chatbots across multiple brands in various industries. What we found paints a clearer picture of where AI in CX is working now. AI chatbots are indeed better than before, but for most, “better” doesn’t mean good enough. Some industries made noticeable progress, but many chatbots still fall short in the most fundamental aspects of customer interaction. The future success of these businesses depends on how well they can harness this technology to bridge the gap between their customers’ needs and meaningful business value.

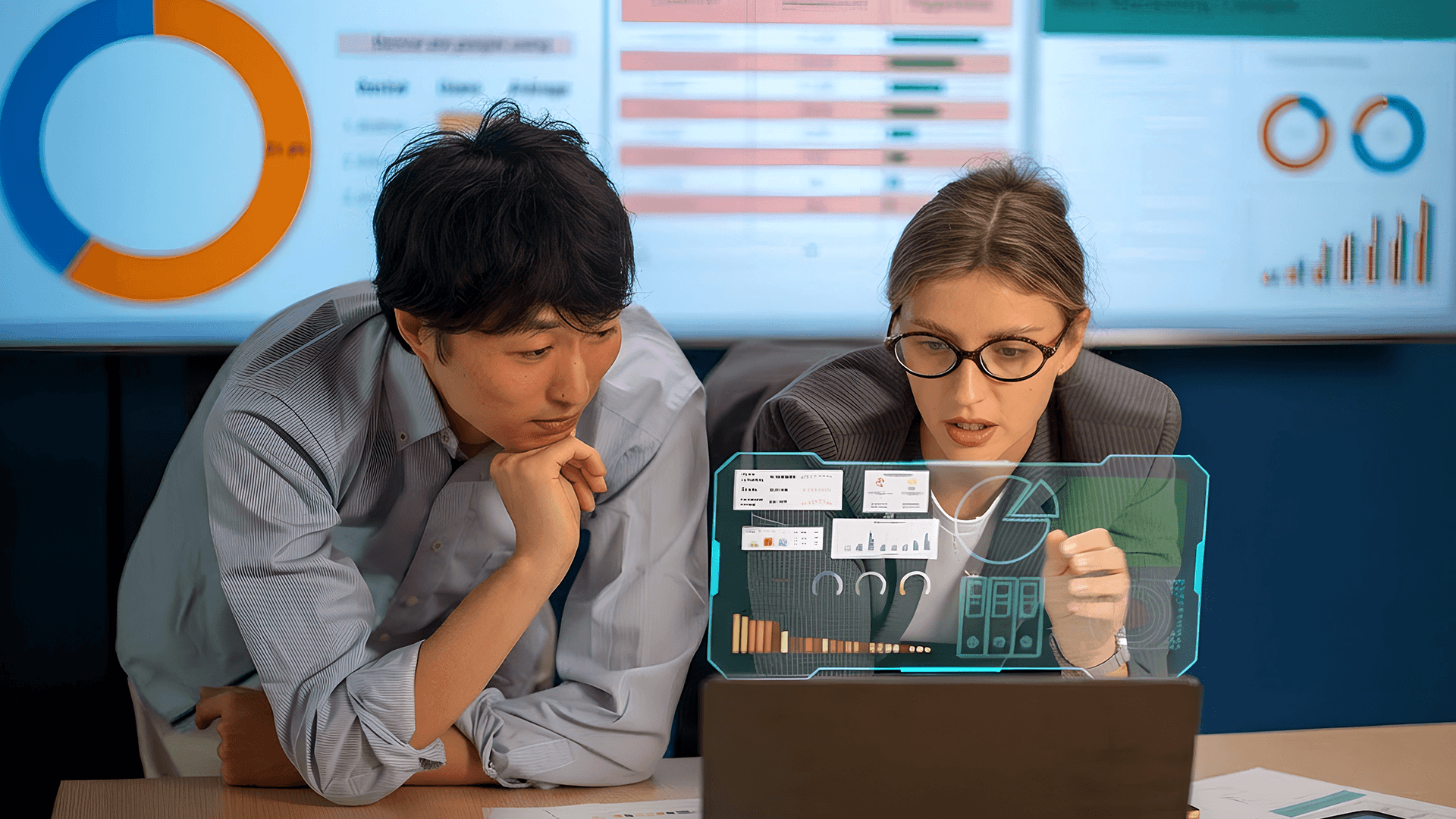

Aptitude for conversation: AI chatbots are talking more, but are they actually listening?

A chatbot can reply, but can it actually hold a conversation? One of the three key areas we assessed was aptitude for conversation — not just whether a chatbot could generate responses, but whether it could listen, understand, and adapt:

- Did the chatbot greet users with a personalized message and offer to help?

- Could it process natural language queries, or did it only recognize specific keywords?

- Did it understand spelling variations, synonyms, and acronyms?

- Was it effortless to start a conversation, or did users struggle to interact?

Across all industries, the chatbots scored 73%. It’s a sign of progress, bringing us closer to bridging the gap between expectation and execution. While customers don’t expect poetic brilliance, they need AI to understand basic variations of common requests.
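To make a checklist-based percentage like this concrete, here is a minimal sketch of one way such a score could be computed. The scoring rule (each criterion marked pass or fail, averaged per session and then across sessions) is an assumption for illustration, not a description of our exact methodology, and the sample observations are made up rather than taken from the study.

```python
from statistics import mean

def area_score(observations: dict[str, bool]) -> float:
    """Percentage of checklist criteria met in one session (assumed rule)."""
    return 100 * sum(observations.values()) / len(observations)

def overall_score(sessions: list[dict[str, bool]]) -> float:
    """Average the per-session percentages across all sessions."""
    return mean(area_score(s) for s in sessions)

# Hypothetical observations for the four conversation criteria above.
# These values are illustrative only, not results from the exercise.
sessions = [
    {"personalized_greeting": True, "natural_language": True,
     "handles_variations": False, "easy_to_start": True},
    {"personalized_greeting": False, "natural_language": True,
     "handles_variations": False, "easy_to_start": True},
]

print(area_score(sessions[0]))   # first session: 3 of 4 criteria met
print(overall_score(sessions))   # average across both sessions
```

A weighted variant, where a criterion such as issue resolution counts for more than greeting style, would follow the same shape with a weight per criterion.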

Where did they improve?

- Better handling of natural-language queries: The shift from rule-based bots to a more dynamic, conversational AI made interactions feel smoother.

- Conversations easier to initiate: Most chatbots now accept simple “hi” messages or provide preset prompts to help customers engage with them.

Where do they still struggle?

- Understanding linguistic nuances: Many chatbots still force customers to reword their queries multiple times before getting a relevant response.

- Retaining context in longer conversations: The chatbots frequently reset the dialogue, leading to repetitive exchanges.
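The struggle with synonyms, acronyms, and misspellings can be illustrated with a small sketch of query normalization placed in front of intent matching. It uses Python’s standard `difflib` for fuzzy spelling matches. The vocabulary, the alias table, and the 0.8 similarity cutoff are all hypothetical choices for illustration. This is not how any of the tested chatbots work.

```python
import difflib
import re

# Hypothetical canonical terms a bot's intents are keyed on.
CANONICAL = ["delivery", "invoice", "password", "refund"]

# Hypothetical synonyms and acronyms mapped to canonical terms.
ALIASES = {"bill": "invoice", "pwd": "password", "shipping": "delivery"}

def normalize(query: str) -> list[str]:
    """Lowercase, tokenize, then map aliases and near-misspellings
    onto the canonical vocabulary. Unknown words pass through unchanged."""
    tokens = re.findall(r"[a-z0-9]+", query.lower())
    normalized = []
    for token in tokens:
        token = ALIASES.get(token, token)
        if token not in CANONICAL:
            # Fuzzy match catches typos such as "pasword".
            close = difflib.get_close_matches(token, CANONICAL, n=1, cutoff=0.8)
            if close:
                token = close[0]
        normalized.append(token)
    return normalized

print(normalize("Reset my pasword"))
print(normalize("Where is my BILL?"))
```

LLM-based chatbots handle much of this implicitly, but an explicit step like this can spare customers from rewording the same request several times.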

Figure 1. Some chatbots made exchanges feel less robotic, but struggled with understanding synonyms, spelling variations, and acronyms.

Chatbots in the education industry ranked the highest in conversational aptitude. While they might not be particularly advanced, their advantage comes from predictability. Use cases such as student inquiries, coursework-related questions, and FAQ-style support follow structured, well-defined formats. It’s a narrower playing field with fewer surprises, making them relatively easier to train.

Banking, government, and tech chatbots ran hot and cold, sometimes competent and other times off the mark. They could be struggling with financial terminology or compliance-heavy responses, while the nature of bureaucratic processes could make it difficult for the chatbot to handle more complex messages. Troubleshooting tech issues also often requires these chatbots to retain context over multiple interactions.

Telco, travel, e-commerce, and retail chatbots had lower scores. It’s possible that the companies are still using models that haven’t evolved to handle dynamic conversations. Initiating one about issues like billing disputes, plan modifications, and network troubleshooting often involves high effort that their AI chatbots might still be unprepared for.

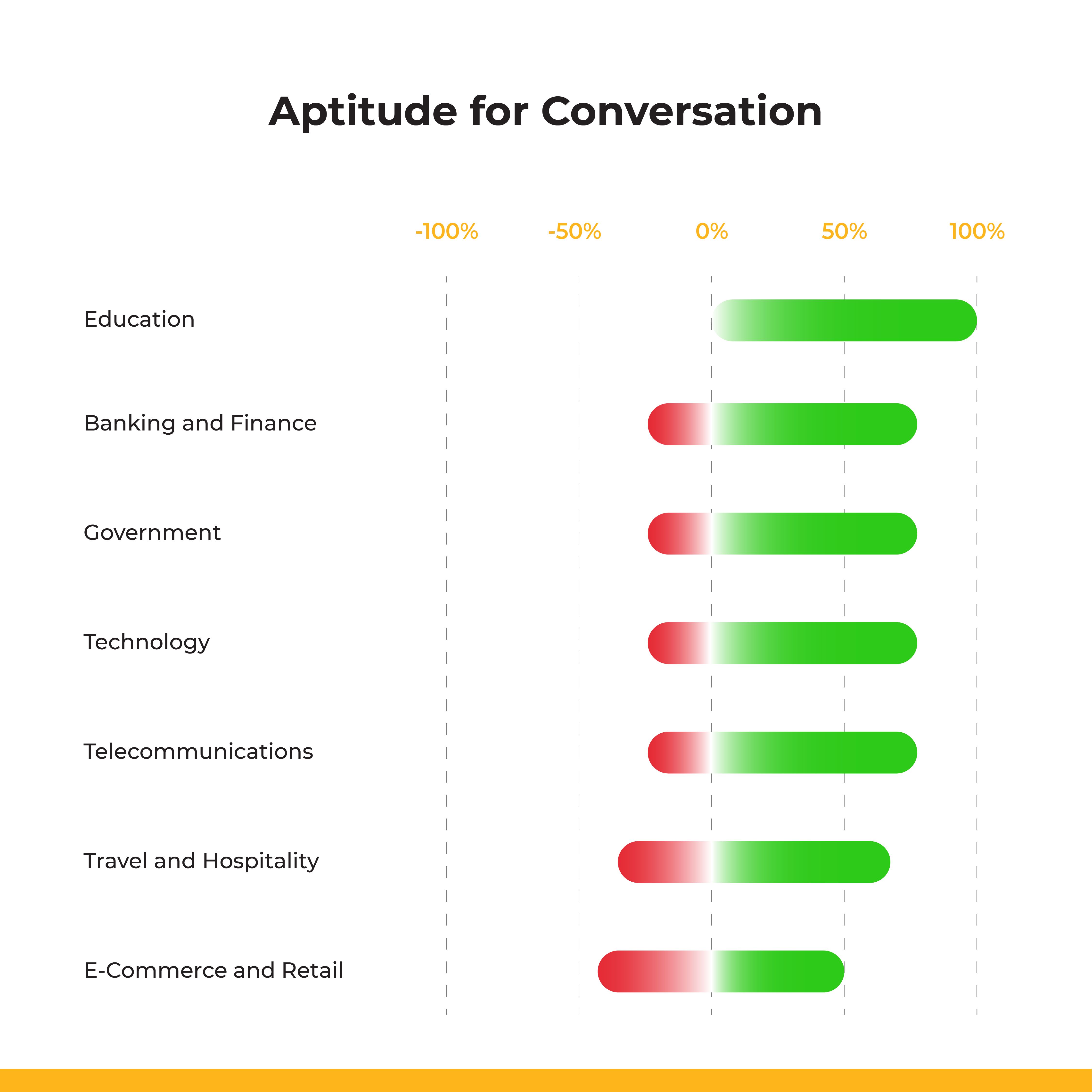

Chatbot effectiveness: Can they solve problems, or just start conversations?

Talking is easier now for chatbots, but can they solve problems? To measure this, we evaluated chatbot effectiveness based on five criteria:

- Was it easy to find details about products or services through the chatbot?

- Did the chatbot’s answers directly address the customer’s query?

- Was the chatbot able to perform the expected task?

- Did the responses contain spelling or grammatical errors?

- Was the issue fully resolved to the customer’s satisfaction?

Across industries, effectiveness scored just 60%, a clear opportunity for growth and refinement. AI-powered interactions might sound better, but they don’t necessarily work better. It appears that the industries seeing the most success are the ones that match AI’s strengths to well-defined, structured problems. In industries where customer issues are multistep, emotionally charged, or require high levels of precision, chatbots could still face challenges.

Where did they excel?

- Easier access to information: Navigating product details and brand FAQs is easier, with fewer clicks and less effort.

- Improved grammatical and spelling accuracy: The chatbot responses contained fewer linguistic errors, signaling higher precision in text generation.

Where did they fall short?

- Task execution: Many chatbots struggled to perform transactions or specific requests.

- Resolution rates: Interactions still required escalation to human agents due to incomplete or inaccurate solutions.

Figure 2. More predictable interactions seem better suited to AI-driven automation in chatbots, as they don’t leave much room for ambiguity.

E-commerce and retail chatbots stood out as the most effective. This could be because customer interactions, such as order tracking, refunds, and product recommendations, tend to follow predictable workflows. Customers aren’t likely to expect deep, existential conversations, and would prioritize speed, accuracy, and completion. Education, banking, finance, government, and technology chatbots also performed reasonably well, which might be due to their ability to retrieve predefined responses quickly.

Where chatbots struggled was in handling complexity and emotional nuance. In education, the AI chatbots might’ve faltered with complex administrative or academic concerns. In banking and government services, the chatbots seemed to struggle with compliance-heavy or security-sensitive requests, areas where precision is also legally required. The same mixed results were seen in travel and hospitality, as some requests, such as itinerary changes, cancellations, and refund disputes, required more personalization.

At the bottom of the scale are telco chatbots. Billing disputes, contract modifications, and troubleshooting require deeper understanding, and an inability to retain context and process multistep resolutions likely contributed to the poor performance.

The nature of customer interactions shapes where AI chatbots work best. When complexity, ambiguity, or deeper reasoning comes into play, cracks start to show. While AI has made strides in automation, multistep problem-solving, contextual awareness, and emotionally charged interactions remain major hurdles.

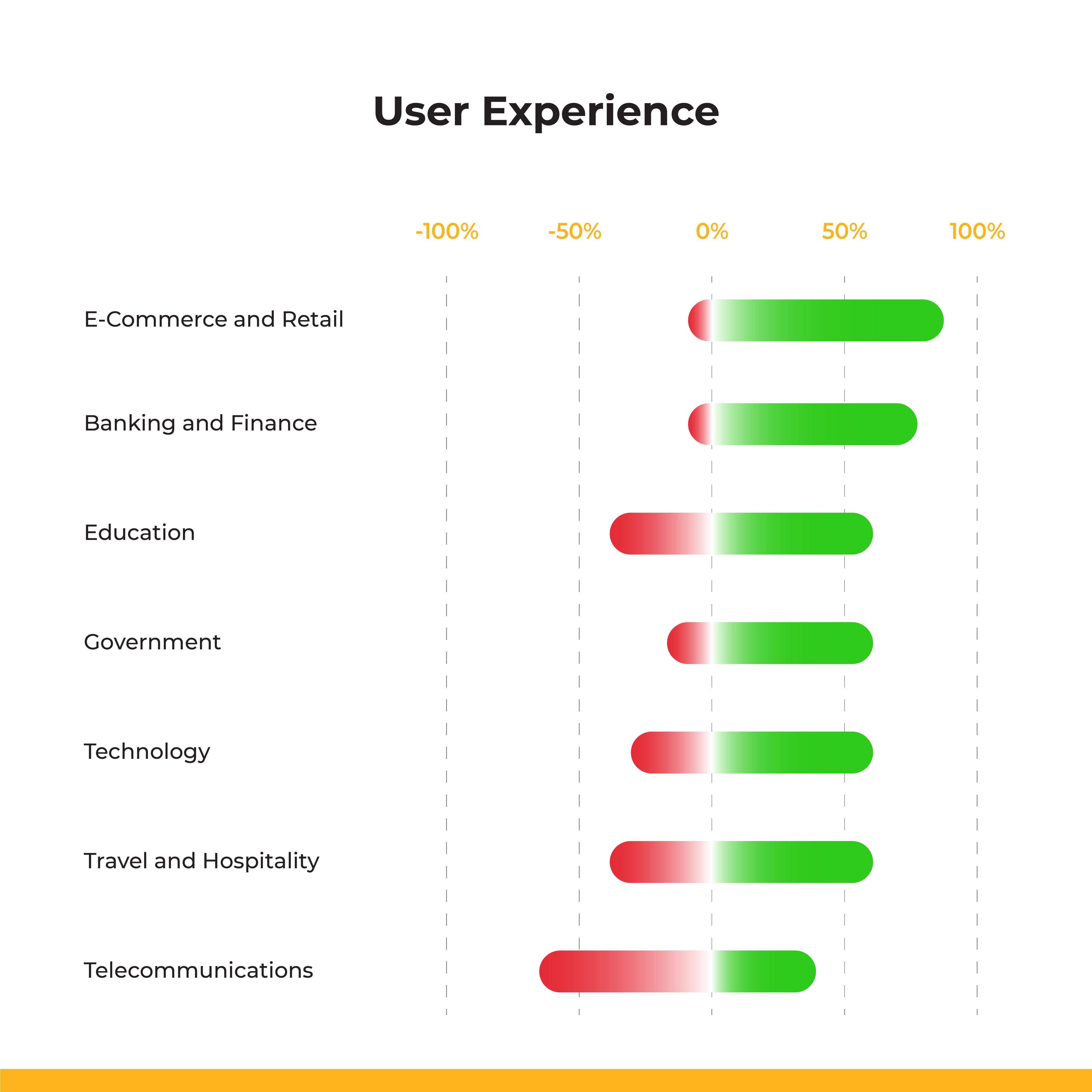

User experience: Can customers engage with AI chatbots without friction and frustration?

A chatbot can hold a conversation and even solve problems, but if the experience of using it is frustrating, confusing, or inefficient, customers won’t stick around long enough to see what it can do. User experience (UX) is often the clincher between adoption and avoidance. A chatbot that’s difficult to use — or worse, one that creates more problems than it solves — isn’t just a bad tool. It’s a business liability. We evaluated chatbot UX based on five key factors:

- Did the chatbot provide clear, concise guidance, or was navigation frustrating?

- Were there glitches, session timeouts, or disruptions that broke the experience?

- Was the chatbot easy to find, access, and engage with?

- When the chatbot couldn’t resolve an issue, did it offer a seamless handoff to a live agent?

- Did the chatbot work fluidly across platforms and systems?

Chatbot UX scored 66% overall, better than effectiveness but still far from frictionless.

What were their signs of progress?

- Straightforward accessibility: Many chatbots were easily discoverable, often appearing as screen pop-ups or persistent chat widgets.

- Session memory: Some remembered chat history across multiple browser windows, preventing customers from having to restart conversations.

What was their room for improvement?

- Lack of escalation: When chatbots couldn’t resolve an issue, they often failed to connect to live support.

- Poor integration: Many chatbots failed to connect with email, meeting schedulers, or customer relationship management (CRM) systems, forcing customers to manually re-enter information.
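Both gaps point to the same design idea: when the bot can’t resolve an issue, it should hand off to a person and pass along what the customer has already said. The sketch below illustrates that idea. The threshold of two failed turns, the `Conversation` class, and the shape of the returned dictionaries are all hypothetical, and a real deployment would route the handoff into its own live-chat or CRM system.

```python
from dataclasses import dataclass, field
from typing import Optional

MAX_FAILED_TURNS = 2  # assumed threshold before escalating; tune per use case

@dataclass
class Conversation:
    transcript: list[str] = field(default_factory=list)
    failed_turns: int = 0

def handle_turn(convo: Conversation, user_message: str,
                bot_answer: Optional[str]) -> dict:
    """Decide the next action for one turn.

    bot_answer is None when the bot could not resolve the request.
    """
    convo.transcript.append(f"user: {user_message}")
    if bot_answer is not None:
        convo.failed_turns = 0
        convo.transcript.append(f"bot: {bot_answer}")
        return {"action": "reply", "text": bot_answer}

    convo.failed_turns += 1
    if convo.failed_turns >= MAX_FAILED_TURNS:
        # Pass the full transcript so the customer doesn't re-enter details.
        return {"action": "handoff", "context": list(convo.transcript)}
    return {"action": "clarify", "text": "Could you tell me a bit more?"}

convo = Conversation()
first = handle_turn(convo, "I was charged twice", None)
second = handle_turn(convo, "My bill shows two payments", None)
print(first["action"], second["action"])
```

Capping failed turns is also one simple guard against trapping customers in a cycle of canned responses.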

Figure 3. Telco AI chatbots show that poor conversational ability and weak problem-solving have a direct impact on how customers feel about the experience.

E-commerce and retail chatbots received the highest UX ratings, likely because they’re designed for efficiency. Customers want fast, frictionless transactions. Many chatbots use quick-select buttons instead of text input, reducing open-ended inputs and minimizing errors.

In banking, finance, education, government, and tech, UX was more inconsistent. Navigating multiple, excessive steps dents the chatbot’s usability, turning the interaction into a tedious chore.

The most frustrating UX issues surfaced in travel, hospitality, and telecommunications. These industries rely on back-end integrations that pull from customer data, transaction histories, and service records, sometimes in real time. When chatbots struggle to retrieve information from multiple disconnected databases, customers face lag, incomplete responses, or even abrupt session failures.

Data silos, cross-platform inconsistencies, and technical debt make chatbots clunky or outright unusable. Without a seamless infrastructure, even the most advanced AI can’t compensate for an obstacle-ridden customer journey where engaging with the most “intelligent” chatbots feels anything but.

The future of AI in CX: With agentic AI on the horizon, what’s next?

Businesses are chasing AI’s latest shiny object, agentic AI. It’s designed to think, plan, and execute tasks on its own. It’s AI that doesn’t just answer questions but anticipates problems, resolves issues, and orchestrates CX autonomously.

Tech giants claim to have it in their offerings already. Experts even predict that by 2028, AI agents will handle 20% of interactions in digital stores designed for humans and will be embedded in 33% of enterprise applications. A third of executives already rank it as their top technological trend for their upcoming data and AI initiatives.

The vision is compelling. But if the ambitious pitch sounds familiar, it’s because it was how the last wave of AI innovations was hyped.

Agentic AI isn’t just an upgrade. It requires businesses to rethink implementation from the ground up. Moving from predefined scripts to AI agents capable of independent decision-making means companies need investments and expertise in infrastructure, data integration, and AI engineering. It’s why three out of four companies are expected to fail in their attempts. Even in an ongoing study that benchmarks large language model (LLM) agents, the best-performing agent succeeded at real-world tasks only 45% of the time.

Most AI agents are still in development. Despite what marketing suggests, they can’t book your vacation, resolve a billing dispute, or process a product return without human intervention — not yet at least. Even if they could, AI systems operating without human oversight raise critical concerns about bias, accountability, and regulatory compliance.

So, if not now, when?

Our mystery shopping demonstrated that AI-driven CX isn’t just about making chatbots more advanced. Businesses investing in AI-powered solutions shouldn’t be asking how to make their chatbot cool and sophisticated. They should aspire to an AI solution that helps customers without making their experience more frustrating. Customers don’t just want AI to sound smarter. They want it to actually work smarter. Case in point: customer doom loops, the vicious cycles that trap customers in automated, canned responses.

Agentic AI, or any advanced LLM or AI solution, will only be as good as the data it operates on. The ability to make autonomous, intelligent decisions depends on how well businesses structure, label, and integrate data across systems. Designing for portability ensures that data can flow seamlessly across applications, systems, and support channels even if you upgrade to newer LLMs. However, this requires expertise in data labeling and transformation. Indeed, it takes hard work to make work and life easier.

So, has AI fundamentally transformed CX?

Yes and no. AI and GenAI are setting new standards in how businesses deliver CX. But when they aren’t used effectively, they won’t deliver on their promise to eliminate the core frustrations that drive customers to seek support in the first place.

AI and GenAI aren’t replacing humans just yet. Instead, we’ll see them evolve to become a better partner in navigating customer journeys. The companies seeing tangible success aren’t the ones chasing them for efficiency’s sake. They’re harnessing the technologies to make CX easier, more intuitive, and less frustrating. New innovations will come and go, but if businesses don’t have a solid foundation to move their CX strategy forward, they’ll keep circling the same roadblocks.