
Trust and Safety in Gaming (Part 1): How Do Players Draw the Line Between Banter and Disruptive Behavior?

25 February 2025

By Ling Quek

VP of Trust & Safety (Global Client Solutions)

You logged into a game, expecting a solid match. Your team syncs up, and for a moment, everything clicks. Then, one missed shot changes everything. The chat turns sour. Unsolicited comments, dismissive remarks, and outright hostility flood your screen and headphones. You report the player, but nothing happens. The match ends, but the frustration lingers.

If moderation tools can’t keep up, what’s the point of reporting at all? Eventually, you stop playing altogether, leaving behind your battle pass and everything you’ve invested in the game.

You’re not alone. According to experts tapped by the ADL, new players drop off at a rate of 600% when their first experiences include negative player interactions.

Our own survey backs this up: Among 652 gamers across seven countries, 65% said they’ve quit or are likely to quit a game due to toxicity — player behaviors that disrupt, harm, or degrade the gaming experience for others.

Is leaving the only option? How can gaming companies adapt to regional expectations? If their current efforts don’t resonate with players, what’s the next move?

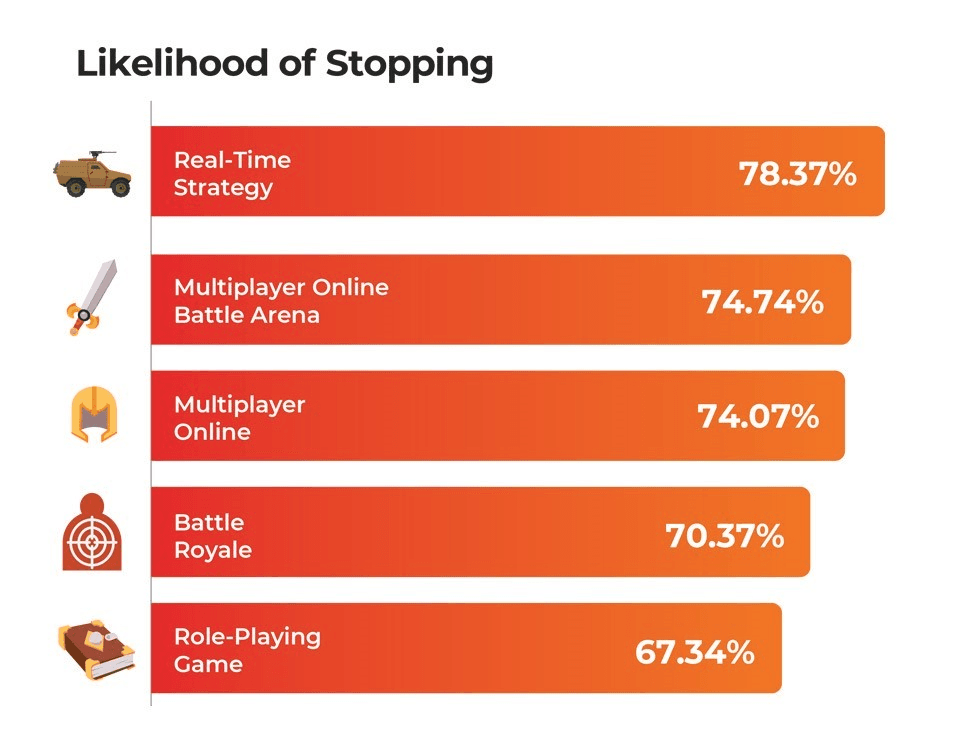

High-stakes competition and disposable social dynamics fuel negative behaviors

Our survey reinforces what many in the industry already know: High-interaction genres like multiplayer online battle arenas (MOBAs), massively multiplayer online games (MMOs), and first-person shooters (FPS) tend to have the most negative player interactions. On average, 73% of the players we surveyed said they were more likely to quit games in these genres due to concerns about negative behaviors from other gamers.

Why are unhealthy interactions cranked up in these kinds of games? A high-pressure, hypercompetitive environment with throwaway social ties could be making them run wild.

In single-player games, failures are internalized. In team-based, ranked environments, performance is a shared burden. That pressure fuels frustration, which is directed outward — it’s easier to flame someone else than to self-reflect, after all. For instance, a study of 26 chat logs from a Southeast Asian server of a MOBA found that flaming teammates for poor performance was the most common form of negative behavior. Another study of 782 gamers in East Asia linked perceived teammate dependence to increased hostility. Even one of the biggest multiplayer game developers found a direct correlation between game losses and harmful interactions. In fact, teams without disruptive players had a 54% higher chance of winning.

On the other hand, session-based games create a disposable social dynamic where every match resets with a new set of players, making long-term accountability almost nonexistent. In some socially interactive games, reputation matters because players will more than likely run into the same people again. In session-based games, players can act out, trash talk, and vanish without consequence. Studies on online disinhibition suggest that anonymity and invisibility fuel negative player behavior. In Finland and the Netherlands, for example, players are more likely to act out when they don’t expect to cross paths with the same opponents or teammates again.

Among the 10 genres in our survey, the games with the highest player interaction had the highest quit rates due to disruptive behavior.

What crosses the line in gaming reflects regional expectations and cultural nuances

Nearly half of South Korean players in our survey believe gaming companies aren’t doing enough to make online spaces safer. Across South Korea, Germany, and China, players see companies’ efforts as too slow to make an impact. This aligns with the ADL’s findings that nearly 60% of adult gamers in the US support regulation for greater transparency in how disruptive behavior is handled.

Managing player interactions is as much about cultural nuance as it is about speed. Gaming experiences and player expectations aren’t universal. South Korean and Chinese players expect fast, top-down interventions, while German gamers demand transparency.

What disruption feels like can also depend on where you are, what game you’re playing, and who you’re playing with. A behavior that’s normal in one gaming culture might be a bannable offense in another. A study analyzing 11 million player reports and 6 million match logs from one of the world’s largest multiplayer online games (MOGs) revealed social behaviors unique to different regions. In South Korea, where collectivist values run deep, some behaviors are perceived as motivators for players to step up their game. In North America and Western Europe, the same actions are more likely to get flagged.

Additionally, an analysis of 1.5 million comment threads from 481 communities across six languages found that topics considered neutral in some languages, such as Spanish and German, generated far more negative discussions in others. These differences aren’t just linguistic. They reflect deep-rooted cultural attitudes toward behavior, authority, and fairness.

What does this mean for gaming companies? Players can’t be managed the same way everywhere. Failing to account for these nuances erodes trust and, ultimately, drives people away from the game.

Scaling trust and safety in gaming takes more than just technology

Ensuring trust and safety in gaming isn’t just about catching bad behavior. It’s also about understanding why and how it happens. Even the most cutting-edge tools can struggle with context and intent, misreading sarcasm, memes, and in-game lingo in ways that lead to false positives. In multilingual environments, slang and cultural phrases could be wrongly flagged. Problematic players can also invent misspellings, coded language, or symbols to dodge detection. This was evident when a battle royale recently introduced new rules on in-game expressions, only for players to quickly find workarounds to troll.
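To make the detection problem concrete, here is a minimal Python sketch of a keyword filter, with and without a normalization step. It is an illustration under stated assumptions, not a description of any real moderation system: the blocklist, the substitution map, and the function names are all hypothetical.

```python
import re
import unicodedata

# Hypothetical blocklist for illustration only; real policies are far larger
# and differ by region, language, and game.
BLOCKLIST = {"noob", "trash"}

# Assumed, partial map of character swaps that players use to dodge filters.
SUBSTITUTIONS = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"}
)


def normalize(message: str) -> str:
    """Lowercase, fold width/accent variants, and undo simple substitutions."""
    text = unicodedata.normalize("NFKD", message).lower()
    # Drop combining marks left behind by the decomposition (accents etc.).
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    return text.translate(SUBSTITUTIONS)


def naive_flag(message: str) -> bool:
    """Exact word match on the raw, lowercased message."""
    words = re.findall(r"[a-z]+", message.lower())
    return any(word in BLOCKLIST for word in words)


def normalized_flag(message: str) -> bool:
    """Exact word match after normalization."""
    words = re.findall(r"[a-z]+", normalize(message))
    return any(word in BLOCKLIST for word in words)


if __name__ == "__main__":
    for msg in ["n00b", "tr@sh", "gg, well played", "take out the trash"]:
        print(f"{msg!r}: naive={naive_flag(msg)} normalized={normalized_flag(msg)}")
```

Normalization catches simple evasions such as `n00b` and `tr@sh` that the naive check misses, but both checks still flag the harmless "take out the trash". A word list alone has no notion of context or intent, which is why false positives and new workarounds keep appearing.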

The same AI-powered tool, rules, incentives, and punishments won’t work across every region. Gaming companies need regional intelligence not just for translation, but for cultural norms and enforcement expectations.

Then there’s scalability. If moderating one game server is hard, imagine doing it for millions of players across dozens of languages, each with different expectations for behavior. It’s no surprise that nearly half of decision-makers say scaling trust and safety is one of their biggest challenges. Even 89% of game developers said more solutions are needed. Among players, nearly half of those surveyed in the US, the UK, and South Korea suggested giving fellow players an active role in helping developers curb harmful behaviors.

The best gaming communities stay ahead when trust and safety measures level up with their players. That’s how TDCX’s “players-for-players” approach helped an industry-leading esports company in the US enhance its gaming experience while expanding into Southeast Asia, East Asia, and Latin America.

Eradicating disruptive, negative behavior in gaming won’t happen overnight, but that doesn’t mean developers have to sit back and take the hits. By combining technology, policy, and cultural awareness, and by teaming up with the right partners, they can turn their players’ safety into a competitive advantage.
